Extreme Programming (XP) is a prominent, disciplined Agile software development framework designed to improve software quality and responsiveness to changing customer requirements. Developed by Kent Beck in the mid-1990s, it takes beneficial engineering practices, such as pair programming, testing, and continuous integration, to “extreme” levels.

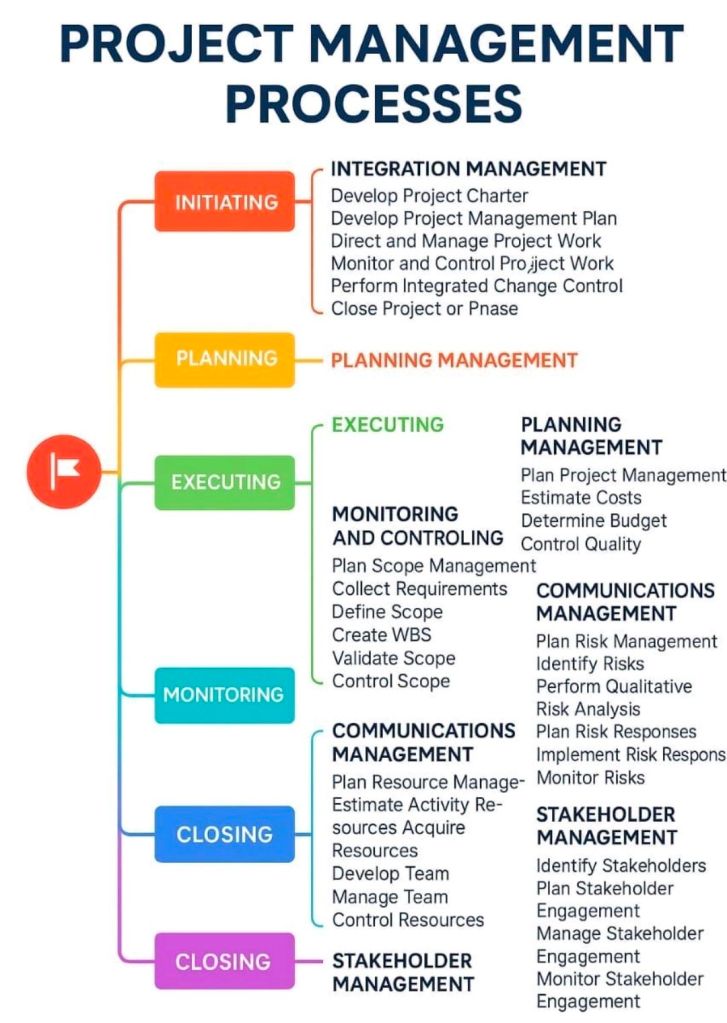

Project Management Summary: Core XP Components

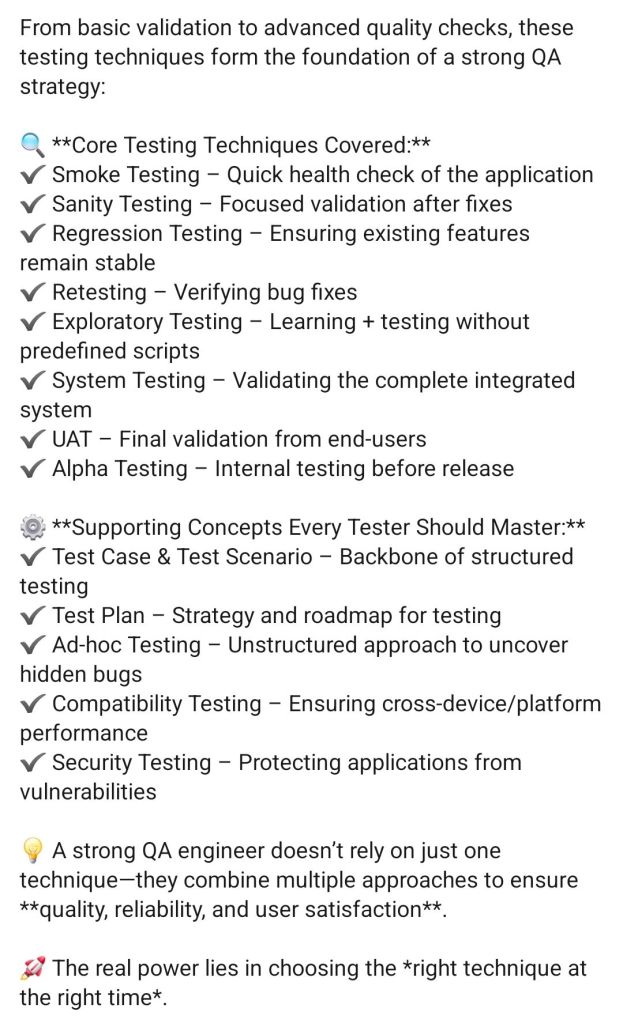

XP differs from other Agile methods by focusing intensely on technical engineering practices alongside project management techniques.

- Core Values: Communication, Simplicity, Feedback, Courage, and Respect.

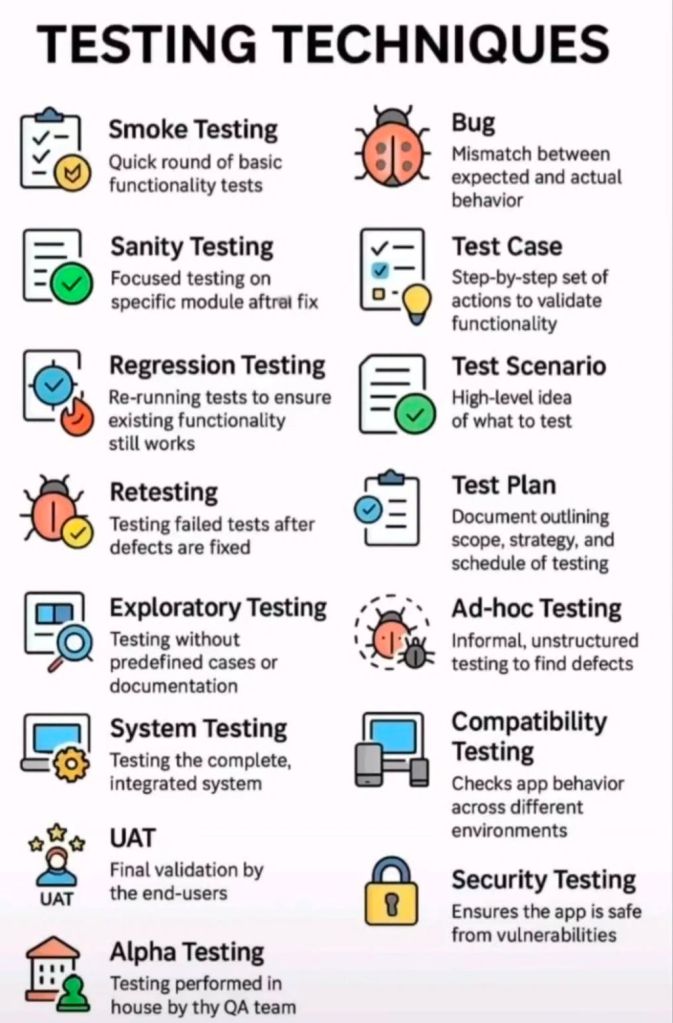

- Key Practices: Pair Programming, Test-Driven Development (TDD), Collective Ownership, Continuous Integration, Refactoring, and Small Releases.

- Project Management Focus:

  - The Planning Game: Combines business priorities with technical estimates to determine what to build next.

  - Small Releases: Frequent, working software releases (often 1–2 weeks) to gather rapid customer feedback.

  - On-site Customer: A customer representative works with the team to provide instant feedback and clarify requirements.

  - Sustainable Pace: Limiting work weeks to 40 hours to avoid burnout and maintain quality.
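The Planning Game described above can be illustrated with a minimal sketch. It assumes the customer expresses priority as a numeric `business_value`, developers supply an `estimate` in story points, and the team has a known `velocity` per iteration. The `Story` class, the `plan_iteration` function, and the sample backlog are all hypothetical names invented for this illustration. XP itself does not prescribe a selection algorithm, and in practice the customer makes the final choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Story:
    name: str
    business_value: int  # set by the customer; higher means more important
    estimate: int        # set by the developers, in story points


def plan_iteration(stories: list[Story], velocity: int) -> list[Story]:
    """Pick stories in customer-priority order while they fit the velocity budget.

    This greedy rule is an assumption of the sketch, not part of XP.
    """
    selected = []
    remaining = velocity
    for story in sorted(stories, key=lambda s: s.business_value, reverse=True):
        if story.estimate <= remaining:
            selected.append(story)
            remaining -= story.estimate
    return selected


# Hypothetical backlog, for illustration only.
backlog = [
    Story("export payslips", business_value=8, estimate=5),
    Story("tax table update", business_value=10, estimate=3),
    Story("audit log", business_value=4, estimate=2),
    Story("new report layout", business_value=6, estimate=4),
]

plan = plan_iteration(backlog, velocity=10)
print([s.name for s in plan])
# prints ['tax table update', 'export payslips', 'audit log']
```

The sketch skips a story that does not fit the remaining budget and keeps looking for smaller ones. A real team might instead split the oversized story or renegotiate scope with the customer.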

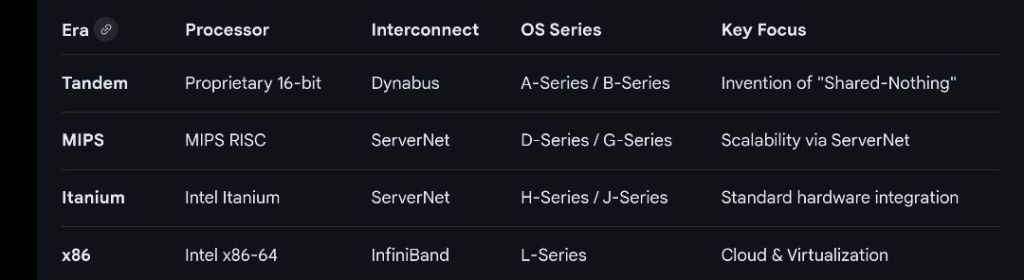

Detailed Historical Timeline of XP

Era 1: Origins and The Chrysler C3 Project (1993–1996)

- 1993: Chrysler launches the Comprehensive Compensation System (C3) project to upgrade payroll software, which struggles for years.

- March 1996: Kent Beck is brought in to lead the C3 project. To salvage the project, Beck starts encouraging team members to adopt a set of technical practices he developed based on his experiences.

- 1996: Ward Cunningham heavily influences the development of early XP concepts, particularly the “metaphor”.

- 1996: The project begins adopting daily meetings, pair programming, and TDD.

Era 2: Formalization and “Embracing Change” (1997–2000)

- 1997: Ron Jeffries, whom Beck brought in to coach the C3 team, helps solidify the practices.

- 1998: The term “Extreme Programming” becomes widely discussed within the Smalltalk and Object-Oriented programming communities.

- October 1999: Kent Beck publishes Extreme Programming Explained: Embrace Change, formally defining the framework.

- February 2000: DaimlerChrysler, formed when Daimler-Benz acquired Chrysler in 1998, cancels the C3 project after 7 years of work. Despite the cancellation, the project showed that the methodology could produce working, high-quality software, just not fast enough to overcome the legacy backlog.

Era 3: Rise of Agile and Expansion (2001–2005)

- February 2001: Kent Beck and Ron Jeffries are among the 17 developers who draft the Manifesto for Agile Software Development at Snowbird, Utah. XP is recognized as one of the foundational “Agile” methods.

- 2001: The Agile Alliance is founded to promote the manifesto's values. XP is considered the dominant agile methodology during this period.

- 2002–2003: XP gains global popularity; numerous books are published expanding on the core 12 practices.

- 2004: The second edition of Extreme Programming Explained is released, shifting focus from 12 rigid practices to more adaptive principles.

Era 4: Integration with DevOps and Continuous Delivery (2006–Present)

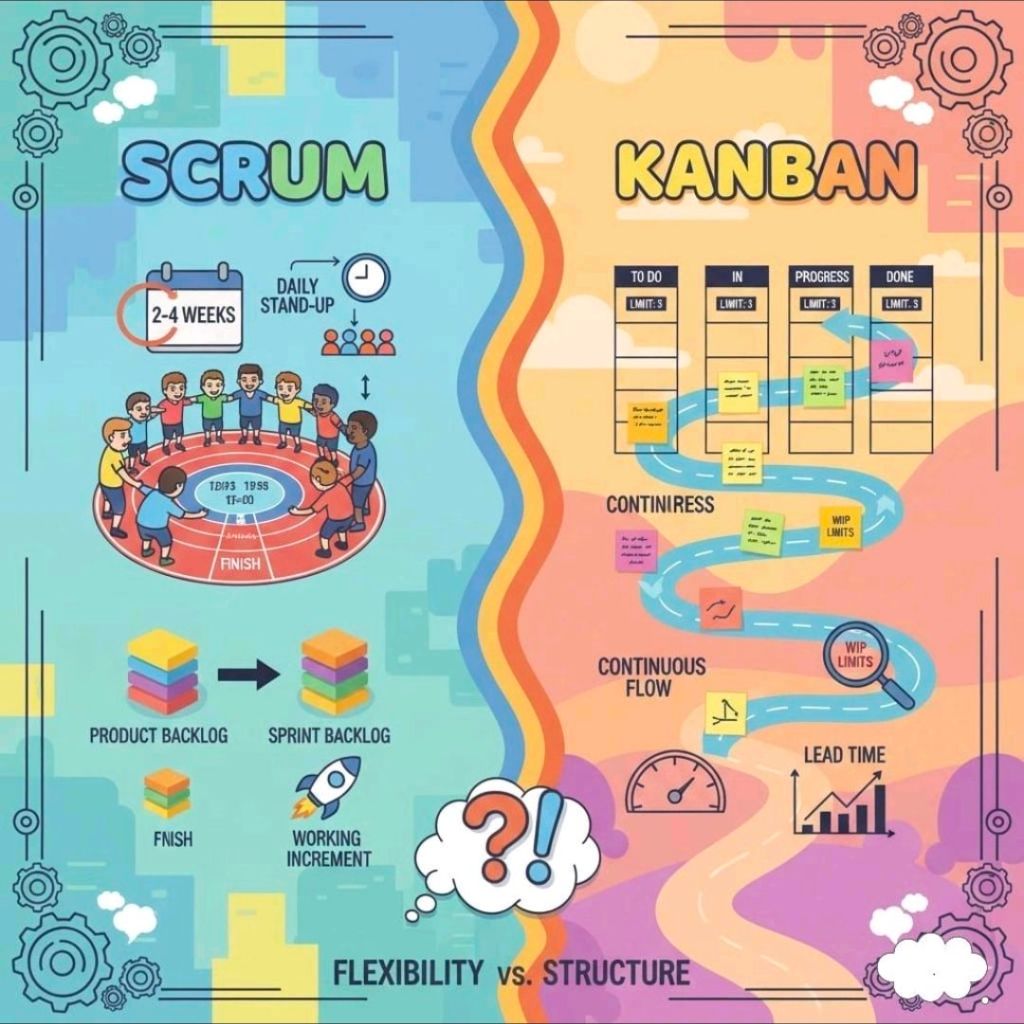

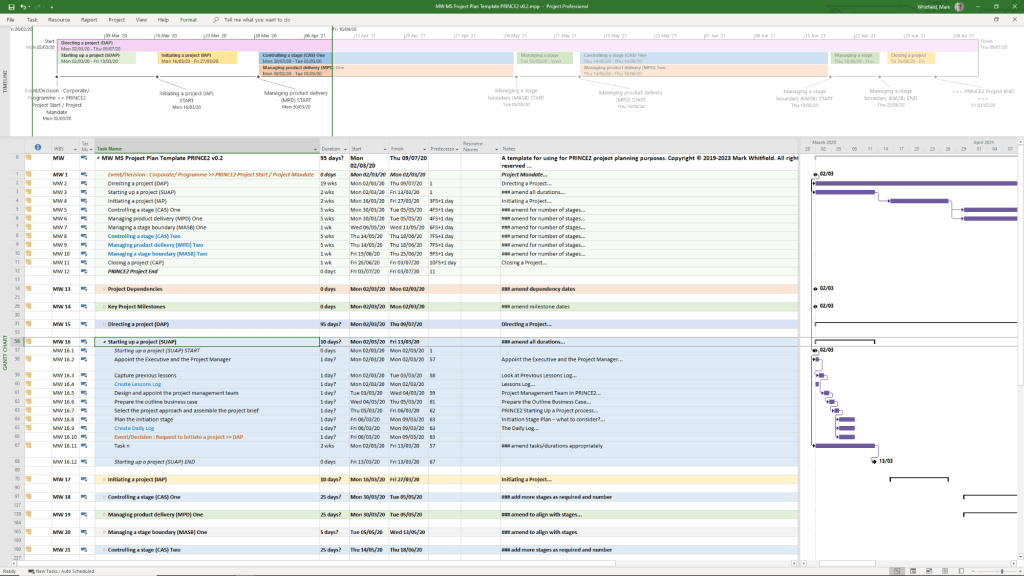

- 2006–2010: As Scrum gains popularity for general project management, XP practices like TDD and Pair Programming become the “standard” technical practices for high-performing teams, often blended with Scrum (ScrumXP).

- 2010s: DevOps and continuous delivery rise to prominence. Both depend on practices that XP popularized, such as continuous integration and automated testing, which underpin CI/CD (Continuous Integration/Continuous Delivery) pipelines.

- 2020–2026: While fewer companies identify strictly as doing “XP,” its technical practices are widely considered essential to modern software development and are integrated into most Agile methodologies to ensure quality and speed.
