Mark Whitfield provides a comprehensive suite of over 200 editable project management templates designed for Agile, Waterfall, and PRINCE2 methodologies. The templates draw on his 30+ years of project delivery experience in high-stakes sectors such as banking and aerospace.

Overview of Project Management Templates

Whitfield’s collection, available on his official website and Etsy, includes specialized tools for various delivery phases:

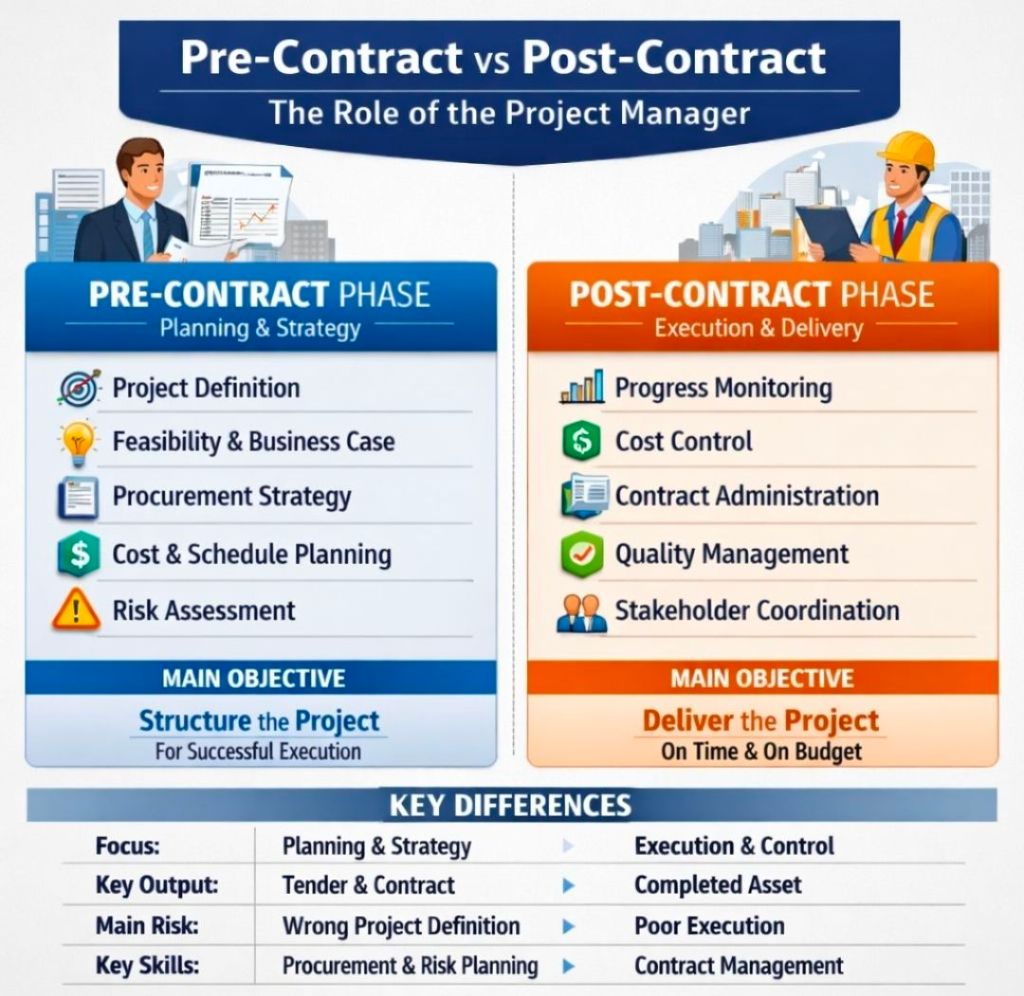

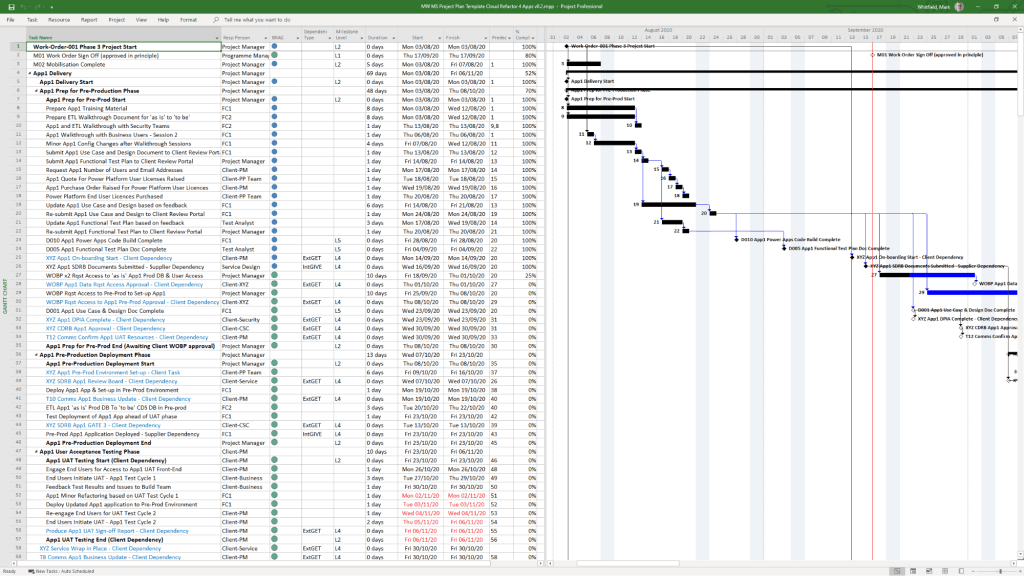

- Planning & Scheduling: Plan on a Page (POaP) templates, with 30+ PowerPoint examples for executive summaries; detailed MS Project (MPP) plans; and Excel-based Gantt charts for teams without MS Project licenses.

- Tracking & Control: RAID Logs (Risks, Actions, Issues, Dependencies/Decisions) with built-in charts, and RACI Trackers for defining roles and responsibilities.

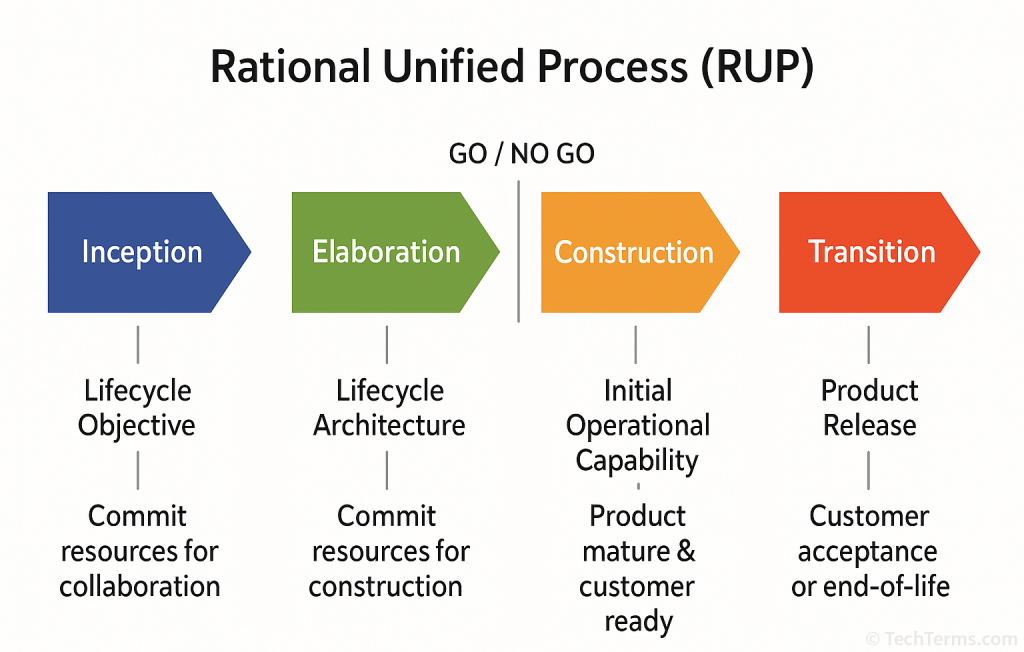

- Methodology-Specific Tools:

- PRINCE2: Full 7th Edition MS Project plans and standard Word templates.

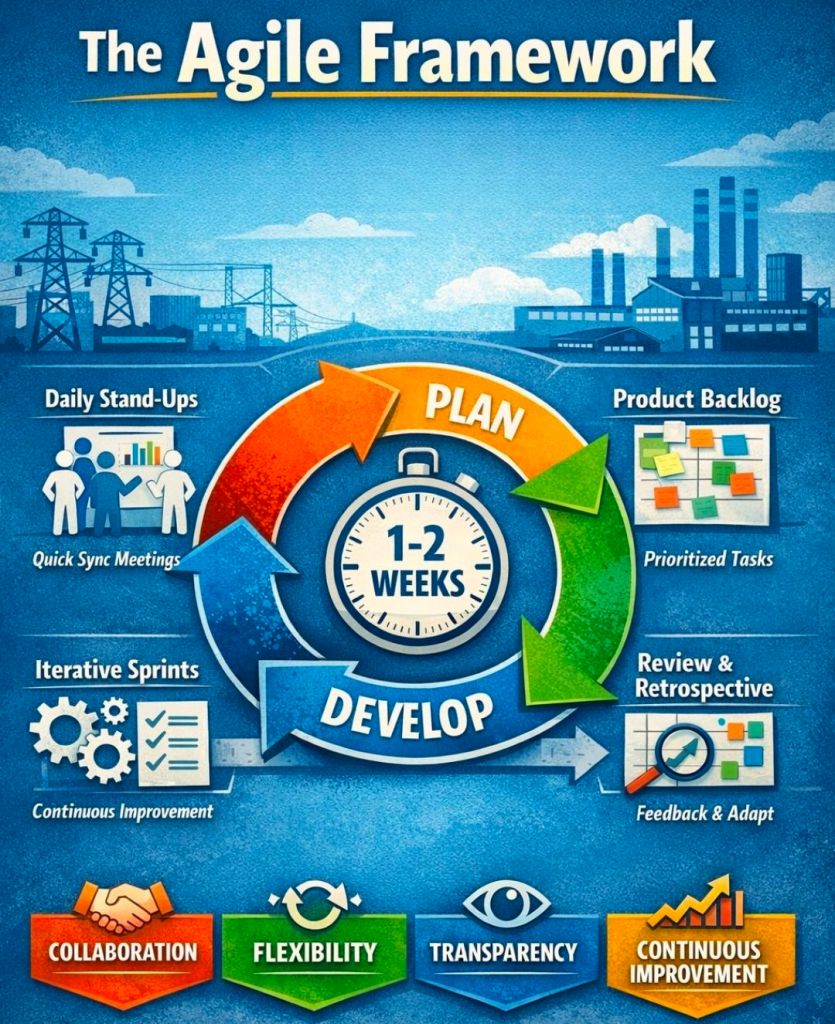

- Agile/Scrum: Agile burn-down and burn-up charts, story dependency trackers, and sprint overview templates.

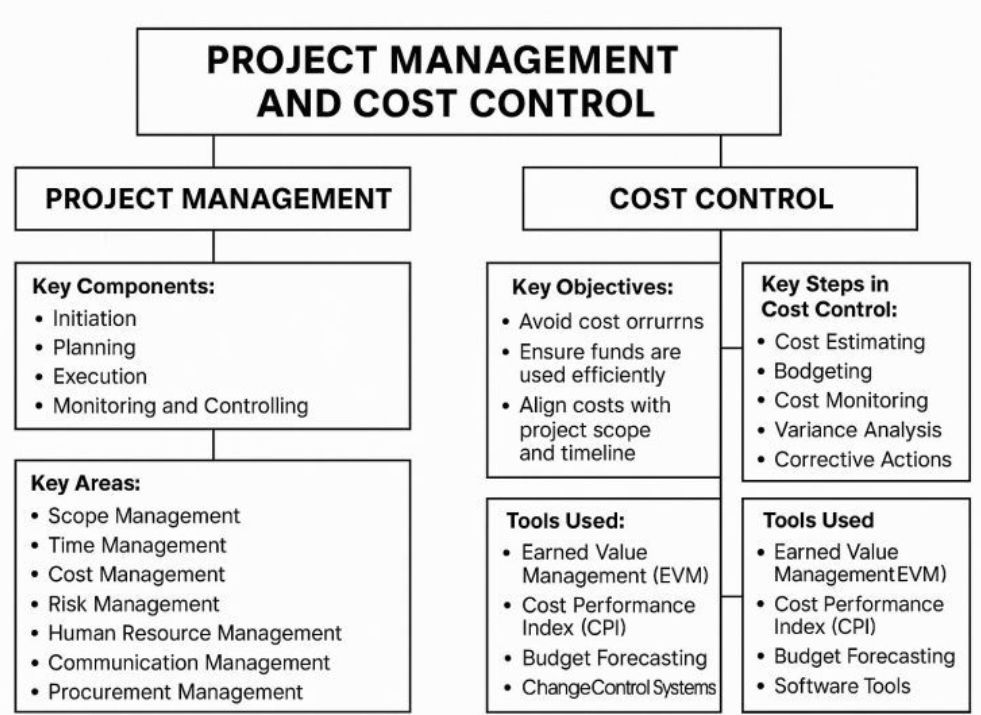

- Financial Management: Detailed trackers for budgets, forecasts, actuals, margins, and resource costing per project phase.

- Reporting & Governance: Weekly/monthly status report templates (Word and PowerPoint), project organization charts, stakeholder analysis plans, and meeting minutes.

- Delivery & Mobilization: Onboarding kits, deployment runbooks, and Statement of Work (SOW) guidance for both Agile and Waterfall.

Historical Career Timeline

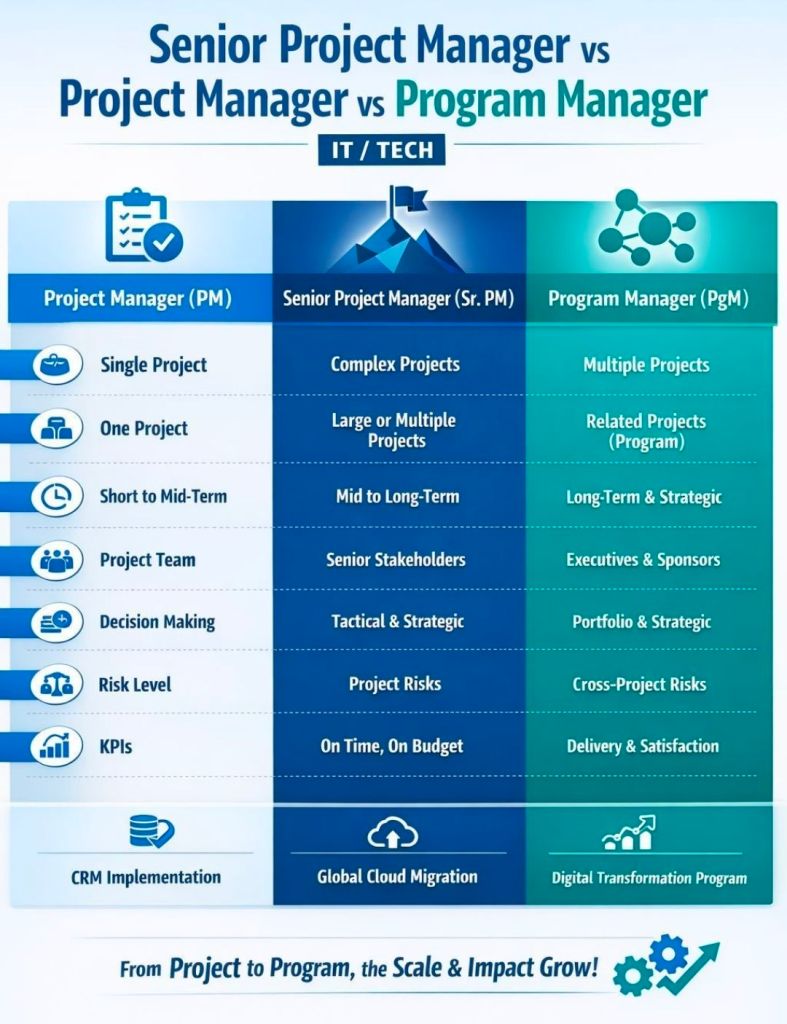

Mark Whitfield’s template development is rooted in a career that evolved from technical programming to senior engagement management.

- 1990–1995: The Software Partnership / Deluxe Data: Started as a programmer specializing in electronic banking software for Tandem Computers (HPE NonStop).

- 1995–2013: Insider Technologies (18 years):

  - 1997: Consultant at CRESTCo (now part of Euroclear) for volume testing and performance benchmarking.

  - 2002: Managed the first HP OpenView Operations 2-way Smart Plug-In certification for the NonStop platform.

  - Early 2000s: Moved into the role of IT Project Manager, running Waterfall projects for real-time log extraction (RTLX) products for clients such as HSBC.

  - Late 2000s–2013: Held senior roles in product and project management, overseeing large-scale transaction monitoring for global banks.

- 2013–2014: Wincor Nixdorf: Served as a Project Manager for the Banking Division, managing a £5m+ project for Lloyds Banking Group (LBG) to replace legacy software across its ATM estate.

- 2014–2016: Betfred: Senior IT Digital Project Manager in the Online and Mobile Division, delivering projects using the Agile Scrum framework.

- 2016–Present: Capgemini UK:

  - 2016: Lead Project Manager for a UK air traffic organization, delivering iOS apps for airspace visualization.

  - 2023–2024: Technical Delivery Manager for a £1m+ UK Government project involving fish export and health document portals.

  - Current: Engagement Manager (certified PRINCE2 Practitioner and Agile Scrum), augmented into MuleSoft.

Project Management Templates Overview and Author Timeline