Pascal is a historically significant imperative and procedural programming language designed by Niklaus Wirth between 1968 and 1969. It was created to encourage structured programming and efficient data structuring, serving as a clean, disciplined alternative to more complex languages of the time like ALGOL 60 and FORTRAN.

Key Features and Overview

- Strong Typing: Every variable must have a defined type (e.g., Integer, Real, Boolean, Char), and the compiler strictly enforces these to prevent errors during execution.

- Rich Data Structures: Pascal introduced built-in support for complex types including records, sets, enumerations, subranges, and pointers.

- Structured Control: It uses clear, English-like keywords such as `begin`, `end`, `if-then-else`, and `while` to organize program logic into manageable blocks.

- Educational Focus: Originally intended as a teaching tool, it became the global standard for introductory computer science courses for nearly two decades.
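The features listed above can be seen together in a short program. This is a minimal sketch written with a Free Pascal-style compiler in mind; the names `TDay`, `TPercent`, `TDaySet`, `TStudent`, and `CountWorkDays` are illustrative and not taken from any standard library.

```pascal
program KeyFeatures;

type
  TDay = (Mon, Tue, Wed, Thu, Fri, Sat, Sun);   { enumeration }
  TPercent = 0..100;                            { subrange of Integer }
  TDaySet = set of TDay;                        { set type }
  TStudent = record                             { record type }
    Initial: Char;
    Score: TPercent;
    Passed: Boolean;
  end;

var
  Student: TStudent;

{ Counts the days of the week that are not in DaysOff. }
function CountWorkDays(DaysOff: TDaySet): Integer;
var
  Day: TDay;
  Count: Integer;
begin
  Count := 0;
  for Day := Mon to Sun do
    if not (Day in DaysOff) then
      Count := Count + 1;
  CountWorkDays := Count;
end;

begin
  Student.Initial := 'A';
  Student.Score := 87;
  Student.Passed := Student.Score >= 50;
  { Assigning a Char such as 'x' to Student.Score would be rejected
    at compile time, because Char and Integer are distinct types. }

  { Structured control: a while loop and an if-then-else }
  while Student.Score < 90 do
    Student.Score := Student.Score + 1;

  if Student.Passed then
    WriteLn('Passed with ', Student.Score)
  else
    WriteLn('Failed');

  WriteLn('Working days: ', CountWorkDays([Sat, Sun]));
end.
```

Every variable has a declared type, and the enumeration, subrange, set, and record types are all defined by the program itself rather than imported from a library.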

Historical Timeline of Pascal

The Foundation Era (1960s)

- 1964–1966: Niklaus Wirth joins the IFIP Working Group to design a successor to ALGOL 60. His “pragmatic” proposal is rejected in favour of the more complex ALGOL 68.

- 1966: Wirth implements his proposal at Stanford as ALGOL W, which introduces many concepts later found in Pascal.

- 1968: Wirth begins designing a new language at ETH Zurich, naming it Pascal after the 17th-century mathematician Blaise Pascal.

The Emergence Era (1970–1979)

- 1970: The first Pascal compiler becomes operational on the CDC 6000 mainframe, and the official language definition is published.

- 1971: Formal announcement of Pascal appears in Communications of the ACM.

- 1972: The first successful port to another system (ICL 1900) is completed by Welsh and Quinn.

- 1973: The Pascal-P kit (P-code) is released, providing a portable intermediate code that allows Pascal to be easily ported to different hardware.

- 1975: The UCSD Pascal system is developed at the University of California, San Diego, eventually bringing the language to microcomputers like the Apple II.

- 1979: Apple releases Apple Pascal, licensing the UCSD p-System for its platforms.

The Dominance Era (1980–1989)

- 1983: ISO 7185:1983 is published, establishing the first international standard for Pascal.

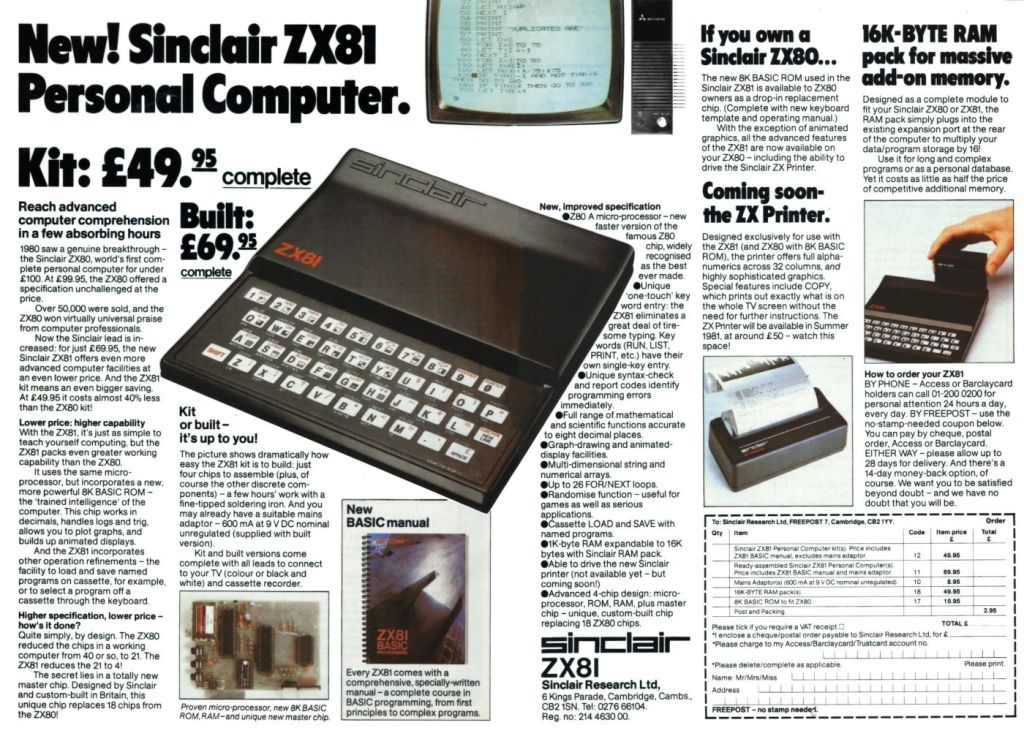

- 1983: Borland International releases Turbo Pascal 1.0. Priced at $49.95, its extreme speed and integrated environment revolutionize PC programming.

- 1984: The Educational Testing Service (ETS) adopts Pascal as the official language for the AP Computer Science exam in the U.S.

- 1985: Apple introduces Object Pascal on the Macintosh to support object-oriented programming.

- 1989: Borland adds object-oriented features to Turbo Pascal 5.5, adopting the Apple Object Pascal extensions.

The Transition and Legacy Era (1990–Present)

- 1990: The Extended Pascal standard (ISO/IEC 10206) is released, adding modularity and separate compilation.

- 1995: Borland releases Delphi, a Rapid Application Development (RAD) tool based on Object Pascal, designed for the Windows graphical interface.

- 1997: The open-source Free Pascal compiler (originally FPK Pascal) emerges to provide a cross-platform alternative to commercial tools.

- 1999: Pascal is replaced by C++ as the official language for the AP Computer Science exam, marking the end of its educational dominance.

- Present: Pascal remains active through projects like Lazarus (an open-source IDE for Free Pascal) and continued updates to Embarcadero Delphi for Windows, macOS, Android, and iOS development.

From a technical standpoint, Pascal is a high-level, statically typed language. Its primary design goal was to encourage structured programming: a disciplined approach that uses clear, logical sequences and data structuring to make code more readable and reliable.

Technical Insights

The technical architecture of Pascal is built on a few core pillars that distinguish it from its contemporaries like C or FORTRAN:

- Strong Typing: Unlike many early languages, Pascal is strongly typed, meaning values of different types cannot be mixed or converted without explicit instruction, apart from a few safe cases such as assigning an Integer to a Real. This reduces runtime errors by catching type mismatches during compilation.

- Block-Structured Design: Programs are organized into clear blocks (using `BEGIN` and `END`), including nested procedures and functions. This hierarchical structure allows for precise control over variable scope.

- Unique Data Structures: Pascal introduced native support for sets (representing mathematical sets as bit vectors) and variant records, which allow different fields to overlap in memory to save space.

- One-Pass Compilation: The strict ordering of declarations (labels, then constants, types, variables, and finally procedures and functions), together with the rule that names are declared before they are used, was originally designed to allow the compiler to process the entire program in a single pass.
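The pillars above can be sketched in one small program. This is an illustrative example rather than a canonical one: `PiApprox`, `TShape`, `TDigitSet`, `Area`, and `Square` are hypothetical names, and the code assumes a Free Pascal-style compiler.

```pascal
program TechnicalPillars;

const
  PiApprox = 3.14159;                    { constants come first }

type
  TShapeKind = (Circle, Rectangle);      { then types }
  TShape = record
    case Kind: TShapeKind of             { variant record: the variants share storage }
      Circle:    (Radius: Real);
      Rectangle: (Width, Height: Real);
  end;
  TDigitSet = set of 0..9;

var
  Shape: TShape;                         { then variables }
  Evens, Small, Both: TDigitSet;

function Area(S: TShape): Real;          { then procedures and functions }

  { Nested function: visible only inside Area }
  function Square(X: Real): Real;
  begin
    Square := X * X;
  end;

begin
  case S.Kind of
    Circle:    Area := PiApprox * Square(S.Radius);
    Rectangle: Area := S.Width * S.Height;
  end;
end;

begin
  Shape.Kind := Rectangle;
  Shape.Width := 3.0;
  Shape.Height := 4.0;
  WriteLn('Area: ', Area(Shape):6:2);

  Evens := [0, 2, 4, 6, 8];
  Small := [0..4];
  Both := Evens * Small;                 { set intersection: [0, 2, 4] }
  if 4 in Both then
    WriteLn('4 is even and small');
end.
```

Because `Square` is declared inside `Area`, the main program cannot call it, which is the scope control that block structure provides. Every name is also declared before its first use, so a compiler can resolve each identifier on first sight.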

General Programming Approach

Pascal enforces a “think before you code” philosophy through its rigid syntax and organizational requirements:

- Top-Down Design: The language encourages breaking complex problems into smaller, manageable sub-tasks (procedures and functions).

- Explicit Declarations: Every variable must be declared in a specific `VAR` section before the executable code begins. This makes each variable's type and scope visible up front and rules out the implicitly declared variables that some earlier languages permitted.

- Algorithmic Focus: Because the syntax is so close to pseudo-code, the approach focuses heavily on the logic of the algorithm rather than language-specific “tricks”.

- Parameter Passing Control: Developers have explicit control over how data moves; using the `VAR` keyword allows passing by reference (modifying the original variable), while omitting it passes by value (working on a copy).
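The difference between value and `VAR` parameters, along with the habit of splitting a task into small procedures, can be sketched as follows. The procedure names (`IncrementCopy`, `IncrementInPlace`, `Swap`, `SortPair`) are hypothetical and chosen only for illustration.

```pascal
program ParamPassing;

var
  A, B: Integer;

{ Value parameter: the procedure works on a copy of the argument }
procedure IncrementCopy(N: Integer);
begin
  N := N + 1;
end;

{ Variable parameter: the procedure works on the caller's variable }
procedure IncrementInPlace(var N: Integer);
begin
  N := N + 1;
end;

{ Small helper used by SortPair (top-down decomposition) }
procedure Swap(var X, Y: Integer);
var
  Temp: Integer;
begin
  Temp := X;
  X := Y;
  Y := Temp;
end;

{ Puts the smaller value in Low and the larger in High }
procedure SortPair(var Low, High: Integer);
begin
  if Low > High then
    Swap(Low, High);
end;

begin
  A := 10;
  B := 10;
  IncrementCopy(A);      { A is still 10 }
  IncrementInPlace(B);   { B is now 11 }
  WriteLn('A = ', A, ', B = ', B);

  SortPair(B, A);        { B becomes 10 and A becomes 11 }
  WriteLn('A = ', A, ', B = ', B);
end.
```

All variables are declared in `var` sections before the `begin` of the block that uses them, and the only way a procedure can change a caller's variable is through a parameter explicitly marked `var`.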

Modern Relevance

While its peak in education was the 1980s and 1990s, Pascal evolved into Object Pascal, which powers modern tools:

- Delphi: A popular IDE by Embarcadero Technologies used for rapid application development (RAD) on Windows, macOS, and mobile.

- Free Pascal (FPC) & Lazarus: Open-source alternatives that bring modern features like generics and anonymous methods to the language.
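As a rough illustration of the Object Pascal dialect, the sketch below defines a small generic class using Free Pascal's `objfpc` mode, where generics are declared with `generic` and instantiated with `specialize`. The class `TBox` and its members are hypothetical; Delphi uses a different generic syntax, so this example is specific to Free Pascal.

```pascal
program GenericDemo;

{$mode objfpc}{$H+}

type
  { A generic container holding a single value of type T }
  generic TBox<T> = class
  private
    FValue: T;
  public
    constructor Create(const AValue: T);
    function Get: T;
  end;

constructor TBox.Create(const AValue: T);
begin
  FValue := AValue;
end;

function TBox.Get: T;
begin
  Result := FValue;
end;

type
  { Concrete type produced by specializing the generic for Integer }
  TIntBox = specialize TBox<Integer>;

var
  Box: TIntBox;

begin
  Box := TIntBox.Create(42);
  WriteLn(Box.Get);
  Box.Free;              { objects are released explicitly }
end.
```

The familiar Pascal structure (type section, declarations before use, `begin`/`end` blocks) carries over unchanged; classes and generics are layered on top of it.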