The HPE NonStop Data Definition Language (DDL) dictionary is a subsystem for defining and managing data objects for Enscribe files and for translating those definitions into source code for several programming languages. It serves as a central metadata repository, keeping data structures consistent across applications written in C, COBOL, TAL, or TACL.

Program Summary

The DDL dictionary program functions as a metadata management tool. Key capabilities include:

- Centralised Definition: Defines records, fields, and file attributes in a hierarchical structure.

- Code Generation: Translates DDL definitions into language-specific source code (e.g., COBOL copybooks or C headers).

- Dictionary Maintenance: Allows users to create, examine, and update dictionaries to reflect changes in data structures.

- Interoperability: Tools such as Ddl2Bean convert dictionary definitions into JavaBeans or XML, enabling cross-language and cross-platform use.
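The idea behind centralised definition and code generation can be sketched in a few lines of Python. This is an illustration only, not the DDL compiler: the `Field` class, the `CUSTOMER` record, the two field kinds, and the exact C and COBOL text produced are all assumptions chosen for the example. It shows one record definition being rendered as both a C struct and a COBOL record layout, so both languages share the same field order and sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    name: str        # DDL-style name; hyphens are allowed
    kind: str        # "char" = fixed-width text, "int32" = 32-bit binary
    length: int = 1  # character count; ignored for "int32"


# Hypothetical record definition, kept in one central place.
CUSTOMER = [
    Field("cust-id", "char", 6),
    Field("cust-name", "char", 20),
    Field("balance", "int32"),
]


def c_name(ddl_name: str) -> str:
    """Turn a hyphenated name into a valid C identifier."""
    return ddl_name.replace("-", "_")


def check_kind(field: Field) -> None:
    """Reject field kinds this sketch does not know how to render."""
    if field.kind not in ("char", "int32"):
        raise ValueError(f"unsupported field kind: {field.kind}")


def to_c_struct(record: str, fields: list[Field]) -> str:
    """Render the field list as a C struct declaration."""
    lines = [f"typedef struct {c_name(record)} {{"]
    for f in fields:
        check_kind(f)
        if f.kind == "char":
            lines.append(f"    char {c_name(f.name)}[{f.length}];")
        else:
            lines.append(f"    int32_t {c_name(f.name)};")
    lines.append(f"}} {c_name(record)}_def;")
    return "\n".join(lines)


def to_cobol(record: str, fields: list[Field]) -> str:
    """Render the same field list as a COBOL record description."""
    lines = [f"01 {record.upper()}."]
    for f in fields:
        check_kind(f)
        pic = f"X({f.length})" if f.kind == "char" else "S9(9) COMP"
        lines.append(f"    05 {f.name.upper()} PIC {pic}.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(to_c_struct("customer", CUSTOMER))
    print(to_cobol("customer", CUSTOMER))
```

Because both renderers read the same `CUSTOMER` list, a change to a field length needs to be made only once, which is the consistency benefit the dictionary is meant to provide.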

Future Outlook

Future development of HPE NonStop DDL is expected to focus on modernisation and integration rather than replacement.

- Data Virtualisation: Possible integration with AI and object storage platforms to expose legacy metadata in open table formats such as Apache Iceberg.

- API Centricity: Enhancements to the NonStop API Gateway will likely use DDL metadata to automate REST/JSON service orchestration.

- Real-time Analytics: Native streaming of NonStop data into platforms like Kafka, using DDL definitions to map real-time changes into analytics-ready formats.
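As a rough illustration of how record metadata could drive a JSON mapping of the kind described above, the following Python sketch decodes a fixed-width record into a JSON document. The `LAYOUT` table, the field names, the big-endian byte order, and the ASCII encoding are all assumptions made for the example; it does not represent any HPE product interface.

```python
import json
import struct

# Hypothetical field metadata: (name, struct format code) in record order.
LAYOUT = [
    ("cust_id", "6s"),     # six characters of fixed-width text
    ("cust_name", "20s"),  # twenty characters, space padded
    ("balance", "i"),      # 32-bit signed integer
]

# ">" selects big-endian with no alignment padding (byte order is assumed).
RECORD = struct.Struct(">" + "".join(code for _, code in LAYOUT))


def record_to_json(raw: bytes) -> str:
    """Decode one fixed-width record into a JSON object string."""
    values = RECORD.unpack(raw)
    doc = {}
    for (name, _), value in zip(LAYOUT, values):
        if isinstance(value, bytes):
            # Text fields are space padded; strip the padding for JSON.
            value = value.decode("ascii").rstrip()
        doc[name] = value
    return json.dumps(doc)


if __name__ == "__main__":
    sample = RECORD.pack(b"000042", b"ACME LTD".ljust(20), 1500)
    print(record_to_json(sample))
```

The decoding logic never names an individual field, so adding or resizing a field means changing only the `LAYOUT` table.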

Internet Links & Manuals

- Primary Reference: HPE Data Definition Language (DDL) Reference Manual

- Java Integration: DDL to Java Bean (NSJI) Programmer’s Reference

- Modern Tools: HPE Shadowbase DDL Transformation Guide

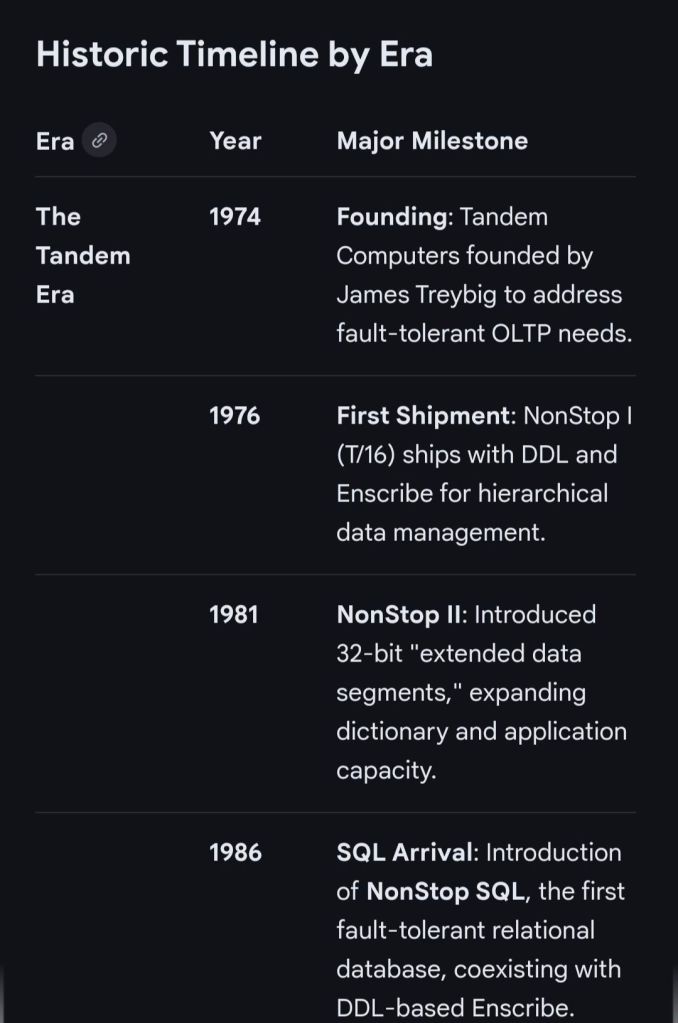

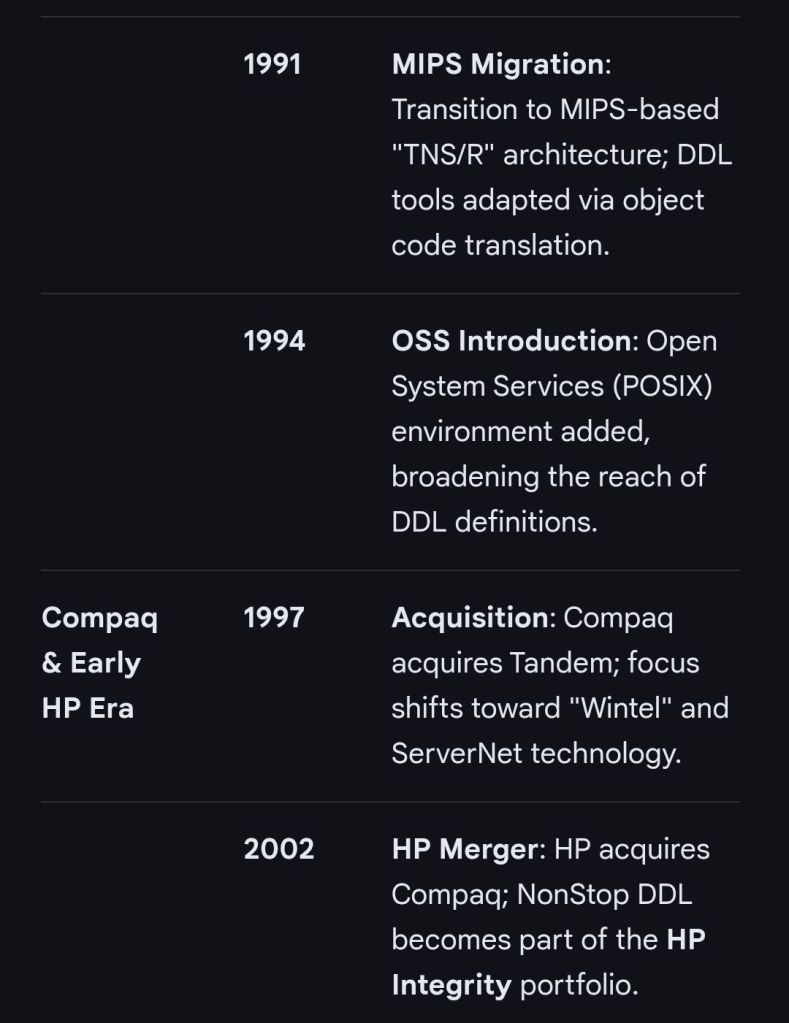

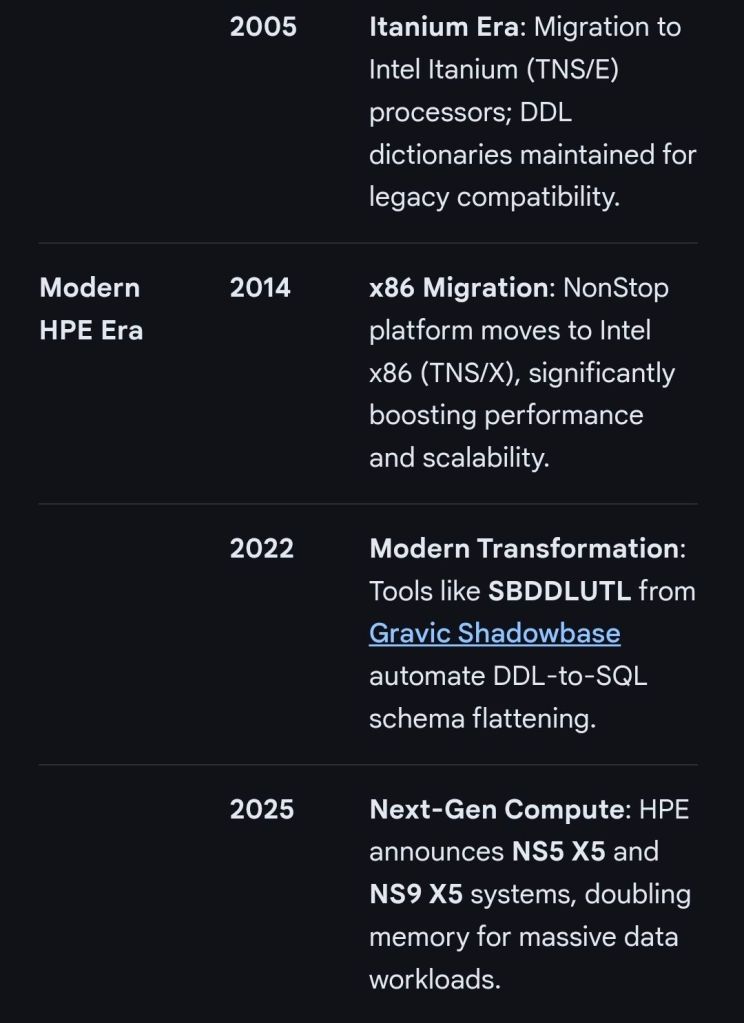

HPE NonStop Data Definition Language (DDL) dictionary overview and timeline